CMS Computing Operations

The Computing Operations group (CompOps) is responsible for turning the raw output of the CMS detector into usable scientific data and making that data available to physicists worldwide. It operates the reconstruction and processing infrastructure, manages all data movement and storage across the global CMS computing grid, and maintains the central services that hold the system together.

What CompOps Does

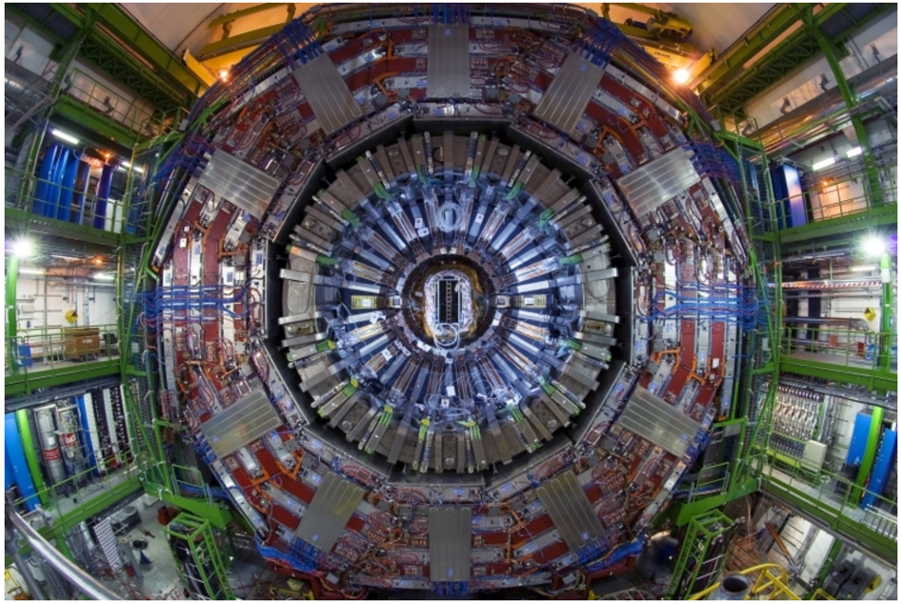

The CMS detector at CERN's Large Hadron Collider produces vast amounts of data during operation. That data must be reconstructed, processed into formats suitable for physics analysis, validated for quality, and distributed to computing centres around the world. CompOps handles this end-to-end chain.

Its responsibilities cover three broad areas: prompt reconstruction of newly acquired data at the Tier-0 facility, large-scale production and reprocessing of simulation and collision datasets across the distributed CMS infrastructure, and management of all data transfers, data placement and storage. The group also coordinates the use of opportunistic resources such as allocations on high-performance computing systems to increase the processing capacity available to CMS.

CompOps carries out this work through three operational groups, each focused on a different stage of the data lifecycle.

Operational Groups

Tier-0

The Tier-0 is the first link between the CMS detector and the offline computing system. When the detector records collision data, the raw output arrives as streamer files at the CERN data centre. The Tier-0 team repacks these into the RAW data format and ensures that at least two independent copies are safely stored on tape at separate sites before any buffer space is reclaimed. This safeguards the data against storage failures and is the single most critical task in CMS computing: all other data formats can be regenerated, but RAW data cannot.

Beyond safe storage, the Tier-0 runs two levels of reconstruction. Express processing produces a rapid first pass within hours of data taking, giving detector experts the calibration and data-quality information they need to keep the detector running correctly. Prompt reconstruction then processes the full dataset as a first complete reconstruction, serving both as an early resource for physics analysis and as a validation that the stored RAW data is intact and usable.

Production and Reprocessing (P&R)

P&R manages the large-scale processing campaigns that produce the simulated and reprocessed datasets CMS physicists use for analysis. This includes Monte Carlo simulation (generating synthetic collision events to compare against real data) and periodic reprocessing of collision data with improved calibrations and reconstruction algorithms.

The team operates the CMS Workflow Management system, which coordinates the distribution of processing tasks across computing centres worldwide. It handles the full lifecycle of each workflow: accepting requests, assigning them to appropriate sites, monitoring execution, recovering from failures and announcing completed datasets to the collaboration.

Key roles within the team include operators who manage day-to-day processing, workflow managers who track requests through the system and campaign managers who coordinate the sequencing and priorities of large production campaigns.

Data Management

Data Management oversees how and where CMS datasets are stored and moved. The global CMS computing infrastructure spans dozens of sites on multiple continents, and keeping the right data at the right place is essential for efficient analysis. The team executes all central data transfers between sites, manages data placement policies and handles data deletion when storage space must be reclaimed.

The group also operates the Any Data, Anytime, Anywhere (AAA) service, which allows physicists to access data remotely without requiring a local copy at their site. It works alongside dynamic data management systems that automatically adjust data placement based on actual usage patterns and available storage capacity.

In the News